Shows all the information Google gets from just one photograph, using AI.

Best part? The description supplied here is probably a limited version of all the information that Google infers from each of your photographs. It would make sense for the site to ask for a short three-paragraph summary of key observations to fit within API limits. On Google’s end? No reason for such limits to exist. So they infer even more from your data than this website can show. And they run this kind of compute on everything you give them.

They “claim” that they don’t sell or share this data. Do you trust them?

You might say you have nothing to hide, but you also don’t get to control the shifting definitions of what’s acceptable. Today you’re fine. Tomorrow you’re labeled a political dissident because of the evidence of Wrongthink that Google happily supplied to the government without your knowledge. Especially in light of the incoming administration, this is an important discussion to have.

Here is a list of FOSS Google Photos alternatives. Immich looks particularly good to me.

> Immich looks particularly good to me.

It is! Been running it for a few years now and I love it.

The local ML and face detection are awesome, and not too resource intensive. I think it took less than a day to go through maybe 20k+ photos and 1k+ videos, and that was on an N100 NUC (16GB).

Works seamlessly across my iPhone, my Android, and desktop.

Immich is great, can recommend. Backup, text-to-image search, timeline, location map, all self-hosted.

Can someone explain what this website wants to prove? Why would I upload my private images to some website? Wouldn’t that be as stupid as using Google Photos in the first place?

Obviously, don’t upload any photos to the demo site that you wouldn’t want shared. That’s pretty basic internet 101. The point is to demonstrate the amount and types of information Google infers from its users’ data. So feed it a pic you don’t care about, or try with the supplied images.

Yeah I’m not sure how trustworthy it is either.

Ente is trying to be an end-to-end encrypted version of Google Photos.

It was made by the creator of Ente, which is a free (5 GB) open source alternative to Google Photos. There are paid plans for more storage.

The creator was a Google developer who left after he found out Google was helping the US military train drones with AI.

I got an unimpressive, repetitive description of the photo I tested. While it was detailed and accurate, there was nothing revealing about it.

So it’s an LLM description of the photo? And a printout of the EXIF data? I’m not sure what this is trying to prove.

It’s a bit of a parlor trick with one photo, but ML/LLMs are about quantity. Imagine this kind of classification and data collection on all 100k of your photos. Now it’s calculating that you redid your kitchen in 2020. You had a Toyota but now you drive a Mercedes. You prefer cats to dogs. You typically wear [insert three colors] T-shirts and always wear jeans.

All it needs is more and more data for the patterns to become obvious.
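
To make the quantity point concrete, here is a minimal sketch of how per-photo labels could be rolled up into a profile. Everything in it is an assumption for illustration: the label names, the `photos` list, and the `build_profile` and `top_by_category` helpers are made up, and nothing here reflects how Google or the demo site actually works.

```python
from collections import Counter, defaultdict

# Hypothetical classifier output: (year the photo was taken, labels found in it).
# The label names are invented for this example.
photos = [
    (2019, {"car:toyota", "pet:cat", "clothing:jeans"}),
    (2019, {"car:toyota", "clothing:jeans"}),
    (2020, {"kitchen:renovation", "pet:cat"}),
    (2023, {"car:mercedes", "pet:cat", "clothing:jeans"}),
    (2023, {"car:mercedes", "pet:dog"}),
]


def build_profile(photos):
    """Count every label overall and separately for each year."""
    overall = Counter()
    per_year = defaultdict(Counter)
    for year, labels in photos:
        overall.update(labels)
        per_year[year].update(labels)
    return overall, per_year


def top_by_category(counter, category):
    """Return the most frequent label in a category such as 'car', or None."""
    prefix = category + ":"
    matches = {label: n for label, n in counter.items() if label.startswith(prefix)}
    return max(matches, key=matches.get) if matches else None


overall, per_year = build_profile(photos)
print(top_by_category(per_year[2019], "car"))  # the car seen most often in 2019
print(top_by_category(per_year[2023], "car"))  # the car seen most often in 2023
print(top_by_category(overall, "pet"))         # the pet seen most often overall
```

One photo gives you one noisy guess. A few thousand photos with timestamps give you counts and trends, which is where the "you switched cars" kind of inference comes from.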

It looks like the prompt is something like: look at this image and tell me information about the subject’s class, race, sex, and age. Give specific details about facial features/expressions, clothing and accessories. Try to determine details about the location and season.

I gave it a screenshot of a selfie I just sent to my wife after a haircut. It was about 60/40 on the details. I could see where the 40% went wrong.

Mildly interesting, nothing to write home about.

I think the 98% of people not in nerdy privacy communities like ours would be shocked by this, so it is something to write home about.

I mean, I’m into AI, and I think it’s cool. But it didn’t peg me as pasty white. It thought the parking lot was empty when it was full. It saw “reflections” in my glasses which were parking spot lines refracted. It made me feel lower middle class because I wore a collarless shirt.

The only things it really nailed were that it’s winter and that I’m a roughly middle-aged guy who wears glasses. At present it’s not breaking the Guess Who game :)

It would legitimately not surprise me at this point if Google starts serving precise bra ads to your girlfriend after discerning cup size from her nudes.

My Android phone did not strip away the metadata. The site not only identified what type of phone I was using but also the exact time and date each photo was taken.

Stripping metadata is up to the website / app, not the OS. Many apps use metadata, some don’t. If they don’t need the metadata and decide to do the right thing, then they’ll strip it.
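
For anyone curious what "stripping it" could look like on the app side, here is a rough stdlib-only sketch that drops the APP1 segments (where EXIF and XMP normally live) from a JPEG. The function name `strip_app1_segments` is made up for this example, and it is a simplification: other metadata (for example IPTC in APP13, or comment segments) is left alone, and a real app would more likely re-encode the image or use an imaging library.

```python
import struct


def strip_app1_segments(jpeg: bytes) -> bytes:
    """Return a copy of a JPEG with its APP1 (EXIF/XMP) segments removed."""
    if jpeg[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG: missing SOI marker")
    out = bytearray(b"\xff\xd8")
    pos = 2
    while pos + 4 <= len(jpeg):
        if jpeg[pos] != 0xFF:
            raise ValueError(f"expected a marker at offset {pos}")
        marker = jpeg[pos + 1]
        if marker == 0xDA:
            # Start of scan: the compressed image data follows, copy it as-is.
            out += jpeg[pos:]
            return bytes(out)
        # The 2-byte big-endian length counts itself but not the marker bytes.
        (length,) = struct.unpack(">H", jpeg[pos + 2:pos + 4])
        if marker != 0xE1:  # keep every segment except APP1
            out += jpeg[pos:pos + 2 + length]
        pos += 2 + length
    out += jpeg[pos:]  # trailing bytes, e.g. an end-of-image marker
    return bytes(out)


# Tiny synthetic JPEG-like byte string, just to exercise the function.
app0 = b"\xff\xe0" + struct.pack(">H", 7) + b"JFIF\x00"
exif_payload = b"Exif\x00\x00phone model and timestamp"
app1 = b"\xff\xe1" + struct.pack(">H", len(exif_payload) + 2) + exif_payload
scan = b"\xff\xda\x00\x02" + b"\x12\x34" + b"\xff\xd9"
sample = b"\xff\xd8" + app0 + app1 + scan

cleaned = strip_app1_segments(sample)
print(b"Exif" in sample, b"Exif" in cleaned)
```

The point is that this happens (or doesn't) in whatever code handles the upload, which is why the behavior differs from app to app.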

I also upload my Android photos to Ente Photos, and the metadata is preserved (thankfully).

AFAIK that would mean ChatGPT and each website (not Firefox) would decide that itself. Firefox doesn’t do it since a website may need it.

It wouldn’t need to be proprietary. The logic could just be “remove everything”. Not sure how the Google Inc thing appeared.

Not working for me at all, with my photos or with the samples provided by the site.

I always get a variation of the same thing:

The image shows a pattern of alternating dark green and light green vertical stripes. There is no discernible background or foreground beyond the repeating pattern itself; it’s an abstract design. There are no objects or spatial depth present in the image.

The image does not depict any people, emotions, racial characteristics, ethnicity, age, economic status, or lifestyle. There are no activities taking place.

Your browser is most likely blocking HTML canvas data. Uploaded photos will often look like colored vertical lines.

There is a privacy setting in Firefox that causes this for me on most websites that require photo upload. Not all sites, but consistently the same sites.

eBay, for instance, and most reverse image searches.

In about:config -> privacy.resistFingerprinting

It might not be that setting specifically, but turning that setting to “false” does fix this for me.

There might be a more granular setting that does the same job, but I don’t know of it.

Not that I’m recommending turning that off, that’s your call.

I’ve also not tried it on this site specifically.

> privacy.resistFingerprinting

There are eleven settings that start with “privacy.resistFingerprinting”. The first one, which only says “privacy.resistFingerprinting” is set to False by default and I still get the colored vertical lines.

In my setup only three of them are altered from the default values.

- privacy.resistFingerprinting

- privacy.resistFingerprinting.block_mozAddonManager

- privacy.resistFingerprinting.letterboxing

“privacy.resistFingerprinting” is the one I was talking about specifically, which works for me in the scenarios detailed in my response.

It’s been a while since I set up this install, but I know I used the arkenfox scripts as a baseline.

I have no idea how much I’ve deviated from the default baseline, so YMMV greatly.

I only mentioned that setting because it’s the one I use to fix my specific problems and it might help as a starting point.

I get that too, so I rapidly lost interest.

Now you even need an AI to tell you what you see in a photo?

What’s up with this website popping up in my feed for the sixth time in less than a week?

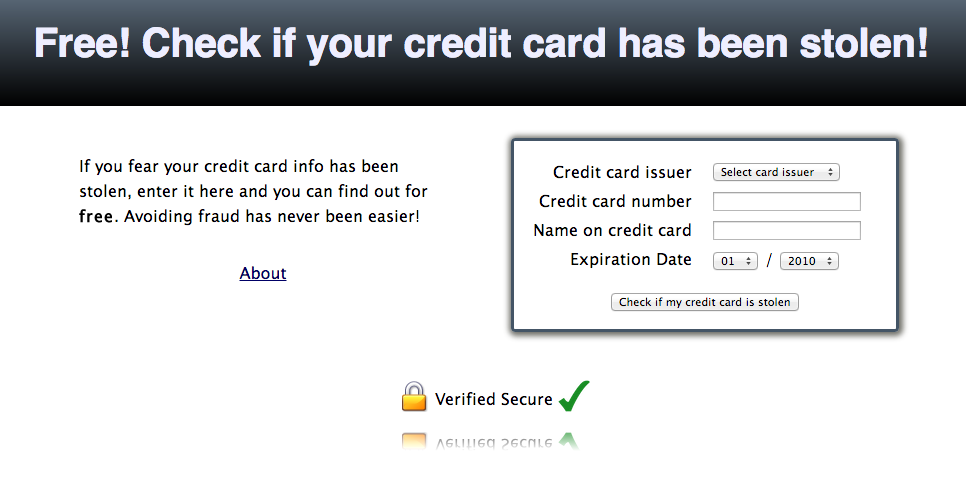

Edit: never mind, after digging through the website for a grand total of 5 seconds, it appears to be an advertising website for Ente (which has a paid plan besides being self-hostable). That’s shitty marketing from them, if you ask me.

From a quick search on my instance, I could find 3 posts that are still up, and I could also find specific comments I remembered from a post that has since been removed.

That’s at least 4 occurrences on Lemmy alone.

I did not criticize people sharing it here, but rather Ente themselves for making vague fear-mongering claims for viral marketing purposes.