☆ Yσɠƚԋσʂ ☆

- 16.8K Posts

- 12.3K Comments

9·18 hours ago

I actually do expect that we’ll see more overland integration of Eurasia happening precisely because of these kinds of tactics.

10·18 hours ago

I guess this is what he meant by joint operation of the strait. 🤣

11·18 hours ago

Yup, and we already saw how vulnerable the US was during the tariff wars.

10·1 day ago

What I expect will happen is that the yuan will become a de facto reserve currency for the simple reason that China is the world’s factory. What made the petrodollar work was that everybody needs oil, and since you could only buy oil in dollars, countries constantly had to acquire dollars to buy it. Similar logic applies to the yuan: if you hold it, you can always convert it into something you need from China. This makes it a safe currency to hold.

10·1 day ago

lmfao, are you suggesting fascists don’t currently control the means of production in the west? Who do you think controls them, exactly?

12·2 days ago

Denmark isn’t the primary contradiction of our times. The significance of Iran being able to challenge US media power globally should not be understated. This is the first time in history that the US completely failed to control the narrative on the war right out of the gate, even in the west. And AI tools are what made it possible for Iran to run its own effective propaganda that connects with people in the west. Traditionally, the US was able to leverage its vast media industry, with artists, designers, 3D modellers, scriptwriters, and so on, who’ve honed their skills in Hollywood, to run propaganda campaigns that could not be equalled. Now, a small team in Iran is able to put out media that’s just as effective at capturing public attention. This is absolutely a revolutionary development.

17·2 days ago

The fact that even the BBC feels the need to talk about it shows how effective Iran’s media game is. :)

48·2 days ago

It’s notable that these Lego movies have so much momentum that even the BBC had to cover them. Iran is absolutely killing it with their media game.

13·2 days ago

The neat part here is that the energy shock hasn’t actually hit yet, because tankers move at around the speed of a bicycle. The ones that left before the strait was closed are still en route. When the oil and gas they carry is gone and the next shipment fails to arrive, that’s when the real fun starts at the pump.

3·3 days ago

For sure, I found it kind of a depressing read too, but it’s such a great analysis of how the media actually works.

6·3 days ago

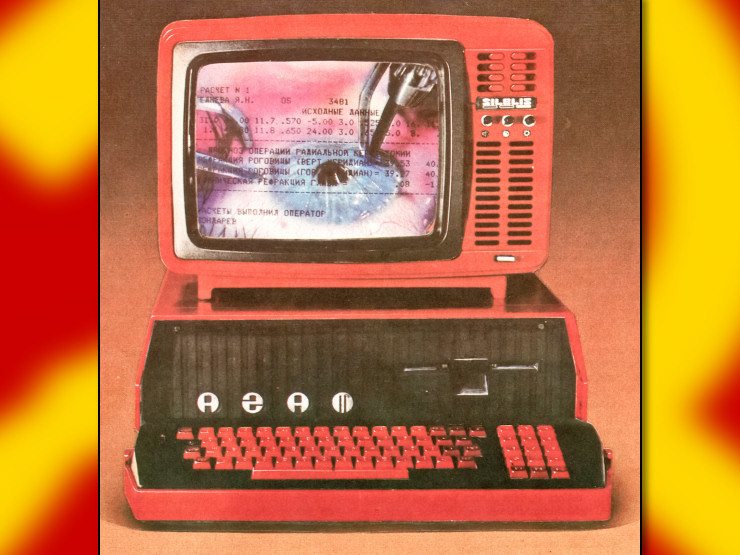

China is just playing Factorio IRL

4·3 days ago

Thanks, and the discussions we’ve had here were the initial motivation for the article. We have to engage with media from all sources, but that doesn’t mean consuming it blindly.

7·4 days ago

I’m just going to shamelessly plug my explanation of dialectics :) https://dialecticaldispatches.substack.com/p/the-toolset-of-reality

42·4 days ago

So, like, an absolute win for Spain then?

4·4 days ago

Seems that even France wasn’t confident they’d get their gold back. What they actually did was sell the gold held at the Fed and buy new gold in Europe.

9·5 days ago

I can’t see the US allowing this to happen either.

26·5 days ago

I’m just hoping the EU collapses before that can happen.

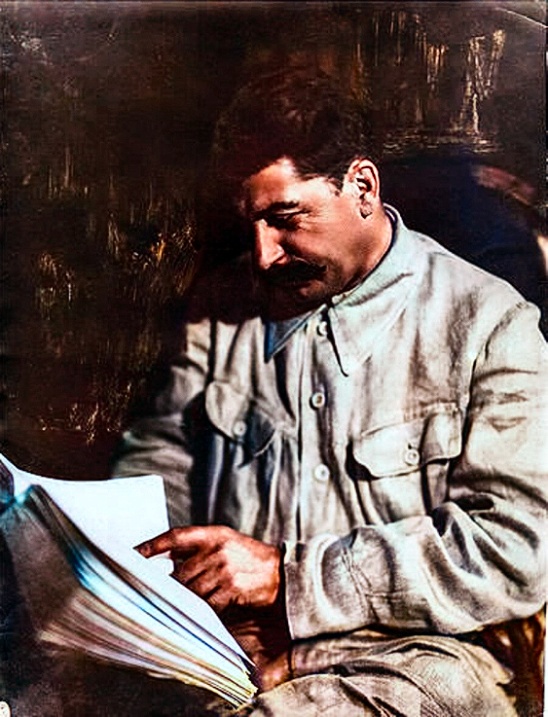

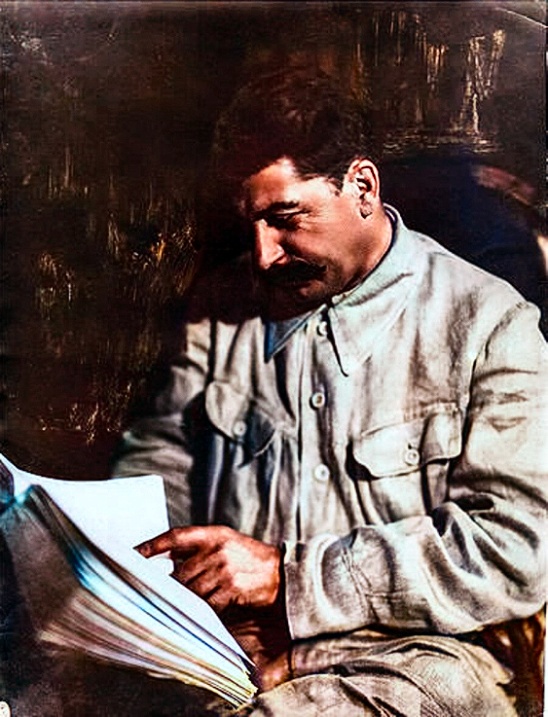

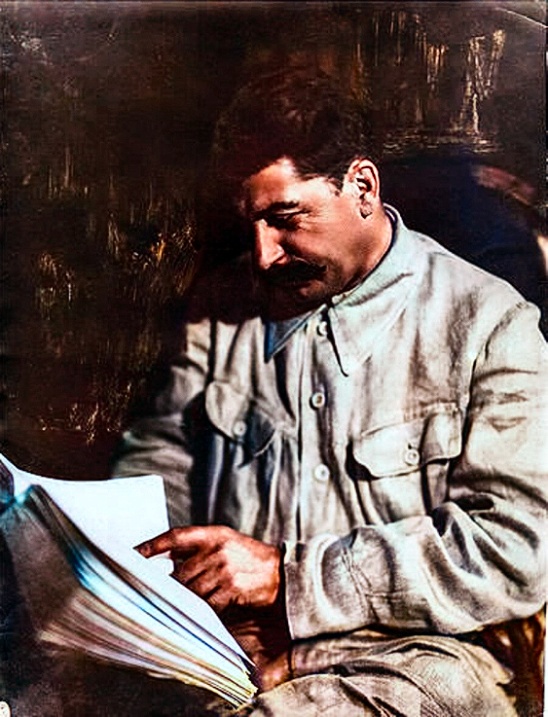

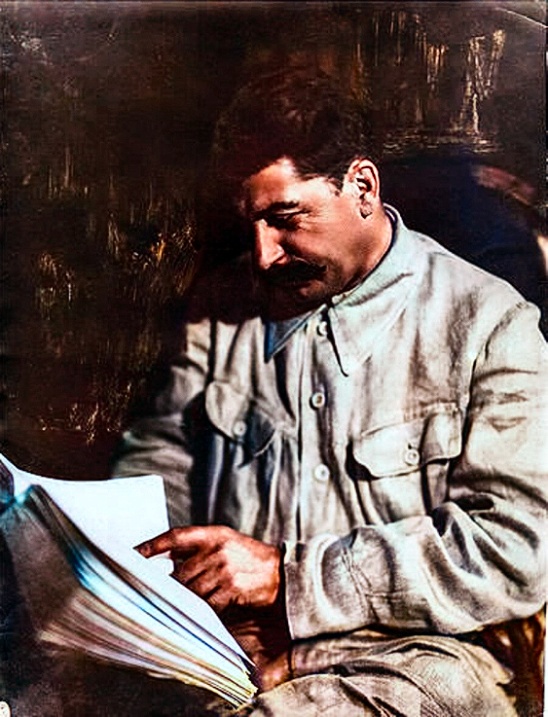

571·5 days ago

western propaganda is very effective

4·5 days ago

My bet is that it’ll restart before the two weeks are up. Lebanon is being bombed right now, and Iran is closing Hormuz in response.

That’s how China tends to do these things. They’ll give out an intentionally vague directive that can then be interpreted as the situation demands.