Lisp is so back

I predicted this. LLMs are like the language center of the brain and all they can do is spit out some convincing word salad. You need actual division of labor. It’s really pathetic that current AI can’t properly crawl a bunch of web pages because it inherently has to digest all that code into the prompt/response stream.

Exactly, it’s like people got a hammer and everything looks like a nail. Like, yes, LLMs can be contorted to do a lot of different tasks, but that doesn’t mean they’re the optimal tool for accomplishing these tasks. It seems that as we’re starting to hit the limits of what you can squeeze out of LLMs, the hype is starting to die down and people are rediscovering other well-known techniques that can be combined with them.

So we’re back to expert systems with a sprinkling of LLM/Transformer architecture…

The tech bros will hate this because by its design it can never become “AGI”, since it’s applying the model to a hyper specific domain. Not that their architecture could ever actually achieve independent “intelligence”, but it’s easier to sell when the model is trained to appear as a generalized problem solver instead of a domain specific one.

I don’t get the impression that the goal is to apply the model to a hyperspecific domain; rather, the idea seems to be to use a symbolic logic engine within a dynamic context created by the LLM. Traditionally, the problem with symbolic AI has been creating the ontologies. You obviously can’t have a comprehensive ontology of the world because it’s inherently context dependent, and you have an infinite number of ways you can contextualize things. What neurosymbolics does is use LLMs for what they are good at, which is classifying noisy data from the outside world and building a dynamic context. Once that’s done, it’s perfectly possible to use a logic engine to solve problems within that context. The goal here is to optimize a particular set of tasks which can be expressed as a set of logical steps.
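To make the split concrete, here is a minimal sketch of the logic-engine half, assuming the LLM has already turned noisy input into a handful of fact triples. Everything in it is hypothetical and only for illustration: the triple format, the `?x` variable convention, and the function names are not from any particular neurosymbolic system, and the facts are hard-coded where an LLM call would go.

```python
# Hypothetical sketch: in a real system the facts would come from an LLM
# classifying noisy input; here they are hard-coded for illustration.

def is_var(term):
    """Variables are strings starting with '?' (an assumed convention)."""
    return isinstance(term, str) and term.startswith("?")

def unify(pattern, fact, bindings):
    """Match one pattern triple against one ground fact, extending bindings."""
    if len(pattern) != len(fact):
        return None
    result = dict(bindings)
    for p, f in zip(pattern, fact):
        if is_var(p):
            # Bind the variable, or fail if it is already bound differently.
            if result.setdefault(p, f) != f:
                return None
        elif p != f:
            return None
    return result

def match_all(premises, facts, bindings):
    """Yield every set of bindings that satisfies all premises."""
    if not premises:
        yield bindings
        return
    first, rest = premises[0], premises[1:]
    for fact in facts:
        extended = unify(first, fact, bindings)
        if extended is not None:
            yield from match_all(rest, facts, extended)

def substitute(pattern, bindings):
    """Replace bound variables in a pattern with their values."""
    return tuple(bindings.get(term, term) for term in pattern)

def forward_chain(facts, rules):
    """Apply rules repeatedly until no new facts appear (naive fixpoint)."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            # Collect matches first so the fact set is not mutated mid-iteration.
            for bindings in list(match_all(premises, known, {})):
                new_fact = substitute(conclusion, bindings)
                if new_fact not in known:
                    known.add(new_fact)
                    changed = True
    return known

# Context the LLM is assumed to have extracted from a sentence like
# "the mug is in the kitchen, which is part of the house".
facts = {("in", "mug", "kitchen"), ("in", "kitchen", "house")}
rules = [
    ([("in", "?a", "?b"), ("in", "?b", "?c")], ("in", "?a", "?c")),
]

derived = forward_chain(facts, rules)
print(("in", "mug", "house") in derived)  # True
```

The point of the sketch is that the ontology is tiny and throwaway: it only has to be valid for the context at hand, and the inference step over it is deterministic.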

That’s super cool, I’ve always thought that it was backwards that we’re using LLMs to add complexity to prompts. They should be used to reduce complexity by recognizing and factoring out patterns.

yeah exactly

Investors don’t want that. They want AI to use more energy, because in their minds that proves that it’s good.

Numba big, mean good!

and that’s why it’s important for this tech to be developed in open source, community driven fashion

I was just thinking about building a (much simpler) version of this for my HA voice assistant. Essentially have a sort of ‘world fact oracle’ it could call to make sure it’s giving correct information when I ask for unit conversions or something like that.
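A rough sketch of what such an oracle could look like for the unit conversion case, assuming the assistant can call a plain function as a tool. The `UNITS` table and the `convert` function are made up for illustration and are not part of Home Assistant or any existing integration; the conversion factors themselves are the standard exact definitions.

```python
# Hypothetical lookup table: each unit maps to (dimension, factor to base unit).
# Base units are metres for length and grams for mass.
UNITS = {
    "m": ("length", 1.0),
    "km": ("length", 1000.0),
    "ft": ("length", 0.3048),
    "mi": ("length", 1609.344),
    "g": ("mass", 1.0),
    "kg": ("mass", 1000.0),
    "lb": ("mass", 453.59237),
}

def convert(value, from_unit, to_unit):
    """Convert value between units of the same dimension.

    Raises ValueError for unknown units or mismatched dimensions, so the
    assistant gets an explicit failure instead of a plausible-sounding guess.
    """
    try:
        from_dim, from_factor = UNITS[from_unit]
        to_dim, to_factor = UNITS[to_unit]
    except KeyError as exc:
        raise ValueError(f"unknown unit: {exc.args[0]}") from None
    if from_dim != to_dim:
        raise ValueError(f"cannot convert {from_dim} to {to_dim}")
    return value * from_factor / to_factor

print(convert(5, "km", "m"))  # 5000.0
```

The design choice is that the LLM only has to map the spoken request onto `(value, from_unit, to_unit)`; the arithmetic never passes through the model.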

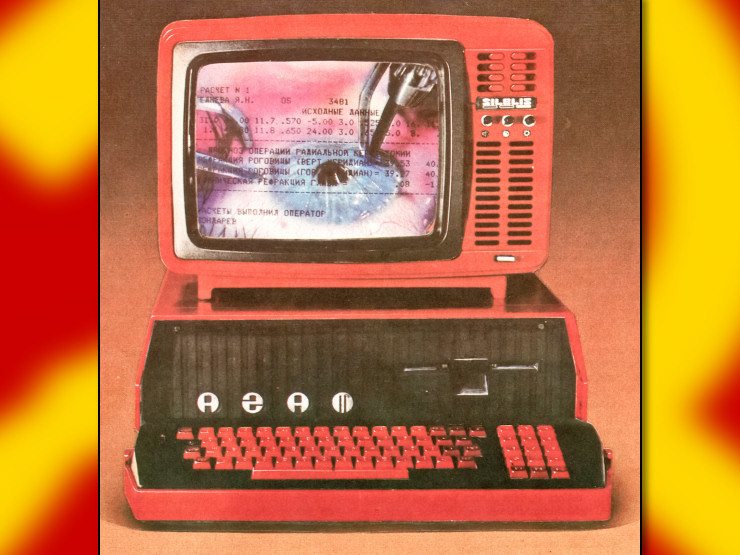

Hell yeah Prolog and Lisp Machines are gonna make a comeback

I really think that LLM coupled with a logic engine running in a REPL environment could be an amazing thing.

I definitely see the value in verifiable, programmable logic code as part of the LLM “thinking” loop, which I think is probably one of the more valuable discoveries of LLM usage.

Taking the embodied form of language (billions of parameters) and coupling it with some Prolog thing so that the “mental” logic is sound as opposed to linguistic interlocution could lead to interesting stuff.

I was always partial to the symbolic AI folks, they were just early in my book.

That’s my thinking as well. The LLM is basically an interface to the world that can handle ambiguity and novel contexts. Meanwhile, symbolic AI provides a really solid foundation for actual thinking. And LLMs solve the core problem of building ontologies on the fly that’s been the main roadblock for symbolic engines. The really exciting part about using symbolic logic is that you can actually ask the model how it arrived at a solution, you can tell it that a specific step is wrong and change it, and have it actually learn things in a reliable way. It would be really neat if the LLM could spin up little VMs for a particular context, train the logic engine to solve that problem, and then save them in a library of skills for later use. Then when it encounters a similar problem, it could dust off an existing skill and apply it. And the LLM bit of the engine could also deal with stuff like transfer learning, where it could normalize inputs from different contexts into a common format used in the symbolic engine too. There are just so many possibilities here.
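Here is a toy sketch of the "ask how it arrived at a solution, then fix one step" idea, with a dictionary standing in for the skill library. It is only an illustration under simplifying assumptions: rules are propositional, the names (`infer`, `explain`, `skills`, `plant_care`) are invented, and the facts would really come from the LLM side.

```python
# Hypothetical sketch: named propositional rules, so every derived fact can
# point back to the rule and premises that produced it.

def infer(facts, rules):
    """Return {fact: (rule_name, premises)}; given facts map to None."""
    why = {fact: None for fact in facts}
    changed = True
    while changed:
        changed = False
        for name, (premises, conclusion) in rules.items():
            if conclusion not in why and all(p in why for p in premises):
                why[conclusion] = (name, premises)
                changed = True
    return why

def explain(fact, why, depth=0):
    """Return the derivation of a fact as a list of indented lines."""
    indent = "  " * depth
    if why[fact] is None:
        return [f"{indent}{fact} (given)"]
    name, premises = why[fact]
    lines = [f"{indent}{fact} (by {name})"]
    for premise in premises:
        lines.extend(explain(premise, why, depth + 1))
    return lines

# A "skill" here is just a named rule set that can be stored and reused.
skills = {
    "plant_care": {
        "dry": (("no_rain", "hot"), "soil_dry"),
        "water": (("soil_dry",), "water_plants"),
    }
}

why = infer({"no_rain", "hot"}, skills["plant_care"])
print("\n".join(explain("water_plants", why)))

# Correcting one step: swap out a single rule and re-run. Nothing is retrained.
skills["plant_care"]["water"] = (
    ("soil_dry", "not_watered_today"),
    "water_plants",
)
why_fixed = infer({"no_rain", "hot"}, skills["plant_care"])
print("water_plants" in why_fixed)  # False
```

The first `print` shows the full chain from `water_plants` back to the given facts, which is the kind of answer you can't reliably get out of the LLM alone. After the rule is edited, the conclusion no longer fires until the new premise is supplied.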

I expect to see cool new repos at https://git.sr.ht/~yogthos/ in the near future comrade

haha if I come up with anything nifty, I’ll be sure to share here :)

so they will just build 100x more data centers

That’s the neat part, once you drop the cost of running these things sufficiently, you don’t need datacenters because people can just run the models locally. We’re currently in the mainframe era of AI, but the pendulum is already swinging towards personal computing as local models continue to improve. These kinds of breakthroughs are going to accelerate the process.

i am kind of pessimistic because i feel like the profit motive will impede the mass implementation of local models. it feels like all good web / tech things stay too niche to make a difference.

That’s just the Silicon Valley model though. Look at China for contrast. Companies there treat models as foundational infrastructure, and they’re not trying to monetize them directly. Hence why we see so much open source work coming out of there right now. It’s similar to the situation we see with Linux, incidentally. A lot of companies contribute to its development, but they monetize things like AWS that are built on top of it. However, even without company engagement, people will continue to work on open source as they always have. It doesn’t really matter if it goes mainstream or not.

> It doesn’t really matter if it goes mainstream or not.

It does matter for it to be environmentally sustainable

What’s happening currently is a bubble that’s not in any way sustainable. And energy prices going through the roof thanks to the war could even be the catalyst that pops it. But as I noted earlier, we’ve gone through this cycle many times in the tech world. New tech often requires a ton of resources to run, which creates the mainframe era, then it gets optimized over time and moves to edge devices. I don’t see anything special about LLMs here. We’re just in the very early stages of a new technology.

There’s a competitive advantage to squeezing more compute out of the same GPU cluster with software optimizations, and that indirectly favors local models. It just depends on whether the optimization work can proceed fast enough to make the DC expansion approach obsolete (or at least leave it with a less profitable ROI).