P=0.05?

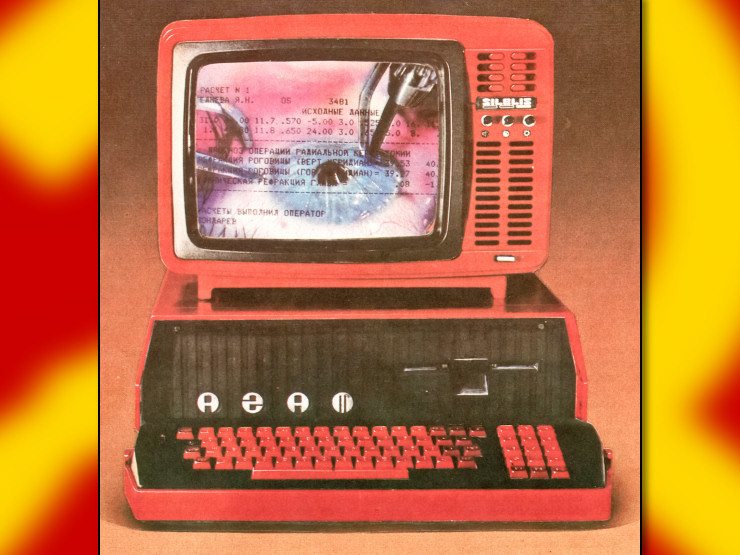

cheaply and less powerful

So AI could have been more sustainable this whole time? Interesting…

Who wants to bet their AI will be used for more useful things too?

AI, as in machine learning, has always had uses. Like, real useful additions to human capability. It’s very useful in pattern recognition and statistical analysis of various things.

The more modern shit connotation comes from capitalists trying to replace labor with generative AI, which is a small subset of potential machine learning uses but by its nature floods us with massive amounts of schlock.

ML has made our xsh translations coherent.

I’ve dealt with ML engineers in depth before. American tech companies tend to throw blank checks their way, and since those engineers don’t tend to have backgrounds in optimization or infrastructure, they spin up 8 billion GPU instances in the cloud and only ever use 10% of them, because engineers are lazy.

They could, without any exaggeration, reduce their energy consumption by a factor of ten with about two weeks of honest engineering work. Yes, this bothers the fuck out of me.

That’s my biggest gripe with mainstream closed-source AI: they can optimize some of their most powerful MLAs to run on a potato, but… they don’t. And they’ll never open-source them, because they’d be forked by people who are genuinely passionate about improvement.

AKA they’d be run outta business in no time.

Unlike in the West where if Nvidia tanks then the entire US economy goes down with it.

Is it actually “sustainable” though? I’d like to see some wattage numbers.

I said this in the other thread but I’ll say it again: our sophisticated artificial intelligence, their planet burning chatbot.

Idk about sustainability. It still requires massive computing infrastructure, which for now requires unsustainable ways of sourcing metals.

rare earth mining

Yeah a lot of the breakthrough research has happened via cheaper / resource limited methods now that the cat is out of the bag. We live in interesting times.

Source: an ML guy I know, so this could be entirely unsubstantiated, but apparently the main environmental burden of LLM infrastructure comes from training new models, not from serving inference on already deployed models.

That might change now that companies are creating “reasoning” models like DeepSeek R1. They aren’t really all that different architecturally, but they produce longer outputs, which just requires more compute.
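A rough way to see why longer outputs cost more compute: a widely used back-of-the-envelope approximation (an assumption here, not something from the thread) puts a dense transformer at about 2N floating-point operations per generated token and about 6N per training token, where N is the parameter count. Under that approximation, inference compute grows linearly with output length, so an answer ten times longer costs roughly ten times as much. The model size and token counts below are hypothetical.

```python
# Back-of-the-envelope compute estimates for a dense transformer.
# ASSUMPTION: the common approximations of ~2*N FLOPs per generated token
# (forward pass only) and ~6*N FLOPs per training token (forward + backward),
# where N is the parameter count. Real costs also depend on context length,
# attention implementation, batching, and hardware utilization.


def inference_flops(n_params: float, output_tokens: int) -> float:
    """Approximate FLOPs to generate `output_tokens` tokens."""
    return 2 * n_params * output_tokens


def training_flops(n_params: float, training_tokens: float) -> float:
    """Approximate FLOPs to train on `training_tokens` tokens."""
    return 6 * n_params * training_tokens


if __name__ == "__main__":
    n = 7e9  # hypothetical 7B-parameter model
    short_answer = inference_flops(n, 500)   # hypothetical plain answer
    long_answer = inference_flops(n, 5_000)  # hypothetical "reasoning" answer
    print(f"reasoning answer costs {long_answer / short_answer:.0f}x a short one")
    # Hypothetical: how many short answers add up to one training run on 1T tokens
    ratio = training_flops(n, 1e12) / short_answer
    print(f"one training run ~= {ratio:.2e} short answers")
```

The linear scaling is the point: per-answer cost is small next to a training run, but if every answer becomes several times longer and usage keeps growing, the inference side of the ledger grows with it.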

Man, at this rate, China is going to somehow create a cryptocurrency or NFT that actually works and isn’t a scam.

BRICScoin when

Well they are looking to create an alternative currency to USD for global trade.

An incredible outcome would be if the US stock market bubble pops because Chinese-developed open-source AI that can run locally on your phone ends up being about as good as Silicon Valley’s stuff.

I think the bubble might not pop so easily. Even if Microsoft is set back dramatically by this, investors have nowhere else to go. The whole industry is in turmoil, and since there’s nothing else to invest in, stocks stay high.

At least that’s how I explain the ludicrously high stock prices we’ve been seeing in recent years.

LLMs that run locally are already a thing, and I wager that one of those smaller models can do 99% of anything anyone would want.

What does it mean for an LLM to run locally? Where’s all the data with the ‘answers’ stored?

In the weights

Imagine if an idea was a point on a graph: ideas that are similar would have points close to each other, and ideas that are very different would be very far apart. An LLM is a predictive model for this graph, just like a line of best fit is a predictive model for a simple linear graph. So in a way, the model is predicting the information; it’s not stored directly or searched for.

A locally running LLM is just one of these models, shrunk down and executing on your computer.

Edit: removed a point about embeddings that wasn’t fully accurate
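To make the line-of-best-fit analogy concrete, here is a minimal least-squares sketch (the data points are made up). Fitting squeezes the points into just two numbers, a slope and an intercept, and the fitted line then predicts a y-value for an x it never saw. This only illustrates the analogy: a real LLM has billions of parameters and predicts the next token of text, but the "predicted, not looked up" idea is the same.

```python
# Ordinary least-squares fit of y = slope * x + intercept.
# After fitting, only two numbers are kept; the original points can be discarded.


def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Return (slope, intercept) minimizing the sum of squared errors."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(params: tuple[float, float], x: float) -> float:
    """Evaluate the fitted line at x."""
    slope, intercept = params
    return slope * x + intercept


if __name__ == "__main__":
    # Hypothetical "training data"
    xs = [1.0, 2.0, 3.0, 4.0]
    ys = [2.1, 3.9, 6.2, 7.8]
    params = fit_line(xs, ys)     # the whole "model" is these two numbers
    print(params)
    print(predict(params, 10.0))  # a prediction for an x not in the data
```

The "cutoff date" idea falls out of the same picture: once the parameters are fitted, nothing new gets in unless you fit again on new data.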

Thanks. That helps me understand things better. I’m guessing you need all the data initially to set up the graph (model). Then you only need that?

Yep, exactly. Every LLM has a ‘cutoff date’, which is the last day that the data used to make the model was updated.

How big are the files for the finished model, do you know?

That’s a great question! The models come in different sizes: one ‘foundational’ model is trained, and that is used to train smaller models. US companies generally do not release the foundational models (I think), but Meta, Microsoft, DeepSeek, and a few others release smaller ones, available on ollama.com. A rule of thumb is that 1 billion parameters is about 1 gigabyte (at 8 bits per parameter). The foundational models are hundreds of billions if not trillions of parameters, but you can get a good model that is 7-8 billion parameters, small enough to run on a gaming GPU.
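The size rule of thumb falls out of simple arithmetic: weight size is roughly parameter count times bits per weight, divided by 8. At 8 bits per weight, 1 billion parameters is about 1 GB; at 16 bits it doubles, and models distributed for local use are often quantized to fewer bits, which shrinks them further. A minimal sketch, assuming only the weights count (file metadata, the tokenizer, and the extra runtime memory for activations and the KV cache are ignored):

```python
# Rough size of model weights in decimal gigabytes (1 GB = 1e9 bytes).
# size_bytes = params * bits_per_weight / 8; with params given in billions,
# the 1e9 factors cancel, leaving params_billions * bits_per_weight / 8.
# ASSUMPTION: weights only; ignores metadata and runtime memory (activations, KV cache).


def weights_size_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB for a model with `params_billions` parameters."""
    return params_billions * bits_per_weight / 8


if __name__ == "__main__":
    for bits in (16, 8, 4):
        print(f"8B model at {bits}-bit: ~{weights_size_gb(8, bits):.1f} GB")
```

This is why a 7-8 billion parameter model is comfortable on a gaming GPU once it is quantized, while a model with hundreds of billions of parameters is not.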

I’ve been messing around with DeepSeek, and I can already tell it’s much smoother and more coherent than ChatGPT or Gemini.

It also doesn’t have the limiters of the American LLMs, where they accidentally generate a true statement about history or politics that doesn’t show the US in a good light, and then have to stop and argue themselves back into the neoliberal / US state department position.

I was mostly looking to see if it would answer questions without huge HR-style disclaimers, but yeah, it doesn’t like to generate responses about current world leaders.

DeepSeek made a mistake with the first query I asked it, so from that sample of 1, I’m treating it with the same caution as any of the current LLMs.

I asked it about testing an electronics part (an Integrated Circuit chip) and it confidently told me how to test an imaginary 16-pin version of the chip.

The IC in question has 8 pins.

When I followed up by asking “why pin 16” it confidently responded with a little lecture about what pin 16 does and just how important pin 16 is.

Once I’d proved to it that the IC has 8 pins, I got this:

“You’re absolutely correct that the MN3101 is an 8-pin DIP (Dual Inline Package) chip. My earlier reference to pin 16 was incorrect, and I appreciate your clarification. Let me provide accurate information for the MN3101 (8-pin DIP).”

The thing is that these chips have a unique ID (the name) and publicly available datasheets that explain, amongst other things, how many pins they have.

You can try the “DeepThink” option or enable the search option next time. Those options will eat up a lot of the context window, but you’ll get much better results for technical questions. It’s still all just LLMs, though, so caution is warranted.

Tried this now and it just generated a biography titled “Chinese Revolutionary Leader”

This is about to make me go

AI

AI, China

China is reaching levels of basedness long thought impossible

AI garbage

AI garbage AI garbage, China

AI garbage, China this but unironically

是 (“yes”)

是 (“yes”)

Why is it based when China does it? It’s still bazinga until I hear otherwise, glad they’re owning the Yanks tho

It may eventually be useful, and because they’re destroying OpenAI and all the other bullshit, it’s worth it anyway

The US Govt. is pumping billions into this shit industry. It will be so funny when it bursts.

It’s not going away. Probably ever. So might as well be happier when China does better with it

I know this shit keeping em awake because mandem talking about 500 billion investment while they’re ringing the alarm on an open source AI

Space race all over again

China’s beating the US at the actual second space race too

Proof? I’m not a skeptic, just a lore enthusiast.

Their space station is seriously impressive, and that’s just one piece of their space program.

They have a microwave oven on the Tiangong, which is a luxury (in space). On the ISS they use a heating pad to warm their food, because the station doesn’t have enough wattage for a microwave.

Lmao they don’t have batteries on the ISS?

No Prime shipping. 🫤

Edit (serious): Maybe they have enough capacitors, but not enough amps(?) to go around? Like, not enough solar panels.

IDK I’m bad at electricity and magnetism.

China has its own space station, and it’s newer and more advanced than the ISS.

The last time the US had an operational space station all of its own was 1979.

Edit: I see nasezero had already mentioned it, heh.

Edit2: Fuuuuuu—I’m about to start up Kerbal Space Program now.

The last time NASA brought samples back from the moon was 1972, China just brought their second batch back last year.

China is cratering the cost of Moon rocks. But at what cost?

Also, China’s moon rocks are extra special because they’re from the far side, where NASA never brought any rocks from.

Ronks.

To add: They have an actual plan for manned landings on the moon within the next 5 years (possibly within 3).

And it’s really funny to see all the “Successes” down the list while SpaceSux has a worse record than the average Kerbal.

https://en.wikipedia.org/wiki/Chinese_Lunar_Exploration_Program

“Gamer” Musk piloting his rockets manually while a helpless and horrified staff look on…

Musk being given a wireless keyboard without batteries and being TOLD by his staff he is piloting the rocket.

“Here you go little buddy”

Here little bro, this is your controller

Musk being handed the

while we actually pilot his sub into the Marianas trench

There are good reasons to think that China will get humans back on the moon before the US despite the claimed agenda of NASA.

NASA’s low budget, the US state’s insistence on reusing Space Shuttle tech and factories, and its insistence on having private corporations handle critical parts of the moon missions, namely the moon lander(s), have put the project in a bad spot. Between the constant shifts from private contractors and politicians and the general mess the project has become, NASA can’t come up with an actual, precise plan for how exactly it will land people on the moon in practice. At the same time, it is under pressure from the state and private contractors to present something, so it keeps giving broad, hand-wavy “plans” to pretend it knows what it’s doing.

Chinese flag on Mars first.

China is focusing on developing infrastructure for a permanent and useful presence on the Moon first.

Space race but instead of human achievement it’s who can generate the most slop possible.

And China is winning with only one hand. The other is building high-speed rail.

Mashallah, Xi AI can continue to lead China prosperously long after Xi is gone

computer, write me a happy song that references China having many sputnik moments and scaring the shit out of western chauvinists

“B-but the tech sanctions were supposed to protect our treat machine scam?! I don’t understand!”

I feel like they could have picked a better name than deepseek

seepdeek?

I dunno. What about one of the Eight Immortals, the one who would astrally project in his sleep to learn the secrets of the universe? That’d be chill. Li Tieguai, I think. Just really scare the West with it.

Seekdeek

It was spun off from a hedge fund, so the name reflects that.

I downloaded DeepSeek-V3 yesterday, but I dunno how to actually run it. I gotta figure out wtf to do this weekend and see what all the fuss is about.

With Ollama and Open WebUI you can get a pretty full-featured experience.
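For the Ollama route: Ollama serves a local HTTP API (by default on port 11434), which front ends like Open WebUI talk to, and you can call it directly as well. A minimal stdlib-only sketch, assuming Ollama is already running on the default port and that you have pulled a model. It sends a non-streaming request to the `/api/generate` endpoint and reads the `response` field from the JSON reply. The `deepseek-r1:7b` tag in the usage line is only an example; use whatever model you actually downloaded.

```python
# Minimal client for a locally running Ollama server (stdlib only).
# ASSUMPTIONS: Ollama is serving on its default port 11434 and the requested
# model has already been pulled (e.g. with `ollama pull <model>`).
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        OLLAMA_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str) -> str:
    """Send the prompt and return the generated text (the 'response' field)."""
    with urllib.request.urlopen(build_request(model, prompt)) as resp:
        return json.loads(resp.read())["response"]
```

Usage, with the server running: `print(generate("deepseek-r1:7b", "Explain what a DIP package is."))`. Setting `"stream": False` returns a single JSON object instead of a stream of partial chunks, which keeps the client simple at the cost of waiting for the whole answer.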

There’s a web client too if you want to just give it a test spin

There’s an official Android app in the Google Play store. Search for DeepSeek. You just have to register an account, then you’re off to the races.

Time to move those goalposts again, posters. Please reassure me that these models will in fact still destroy all life on earth because your google image searches got worse.

Oh you can be sure that healthy global competition (fuck I can’t tell what level of irony I’m on at this point) will increase overall energy expenditure on the slop remixing machines.

Won’t someone think of the ocean boiling treat printer slop redditor waifu bazinga coomer plagiarism treats?!

Evidently we stay thinking about them. It’s getting harder and harder to ignore. :(