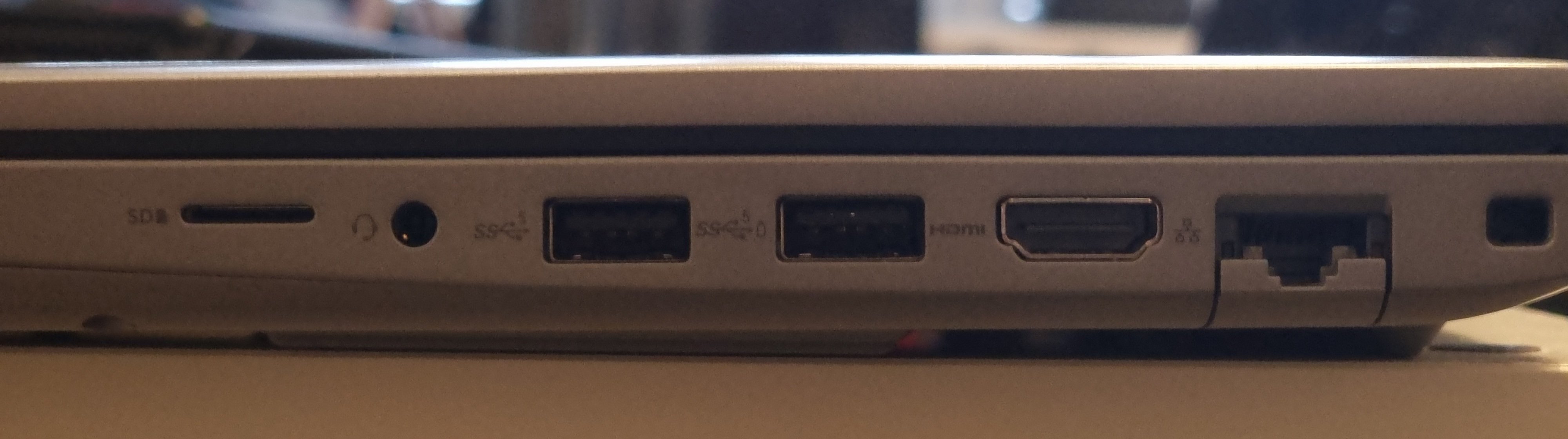

This is my ~8 month old work laptop.

Is a Dell.

2 usb c not pictured.

You have options.

As long as you’re not an apple cult member you do.

Apple brought back the mag charger.

I wish it still had the SD reader and one A port, but it doesn’t really come up that often. Just 3D printing and only because I’m too lazy to set up an OctoPrint server or whatever.

MBPs all have HDMI and SD slots… but definitely set up the OctoPi with a cheap webcam. I’ve run one for years now and it’s so nice to be able to kick off and check on prints from my phone. Not to mention it doesn’t matter what computer I slice on, and the files are small enough that I have gcode for almost everything I’ve printed, for instant access to reprint whenever.

An octopi is a fun project, for mine I printed a new internal enclosure for the mainboard that has mounts for the pi, so the printer is completely integrated with it (never did finish setting up the internal power routing to power it directly off the power supply, but that’s also completely doable)

I purposely don’t do the printer PS powers the octopi thing… I like to be able to drop some gcode on it for later or do updates when the printer isn’t on.

they do have SDXC card readers:

2024 16" macbook pro: https://support.apple.com/en-ca/121554

- Charging and Expansion

- SDXC card slot

- HDMI port

- 3.5 mm headphone jack

- MagSafe 3 port

- Three Thunderbolt 5 (USB-C) ports with support for:

- Charging

- DisplayPort

- Thunderbolt 5 (up to 120Gb/s)

- Thunderbolt 4 (up to 40Gb/s)

- USB 4 (up to 40Gb/s)

Ahh that’s nice, I bought the 2015 right after the Touch Bar pros went on sale because of the “you only need USB c now” ethos.

I later inherited a Touch Bar MacBook Pro, and it has frequent charging problems with USB C.

It’s gonna be time for an upgrade in a couple more years, and it’s nice to know that the new MBPs are sane again.

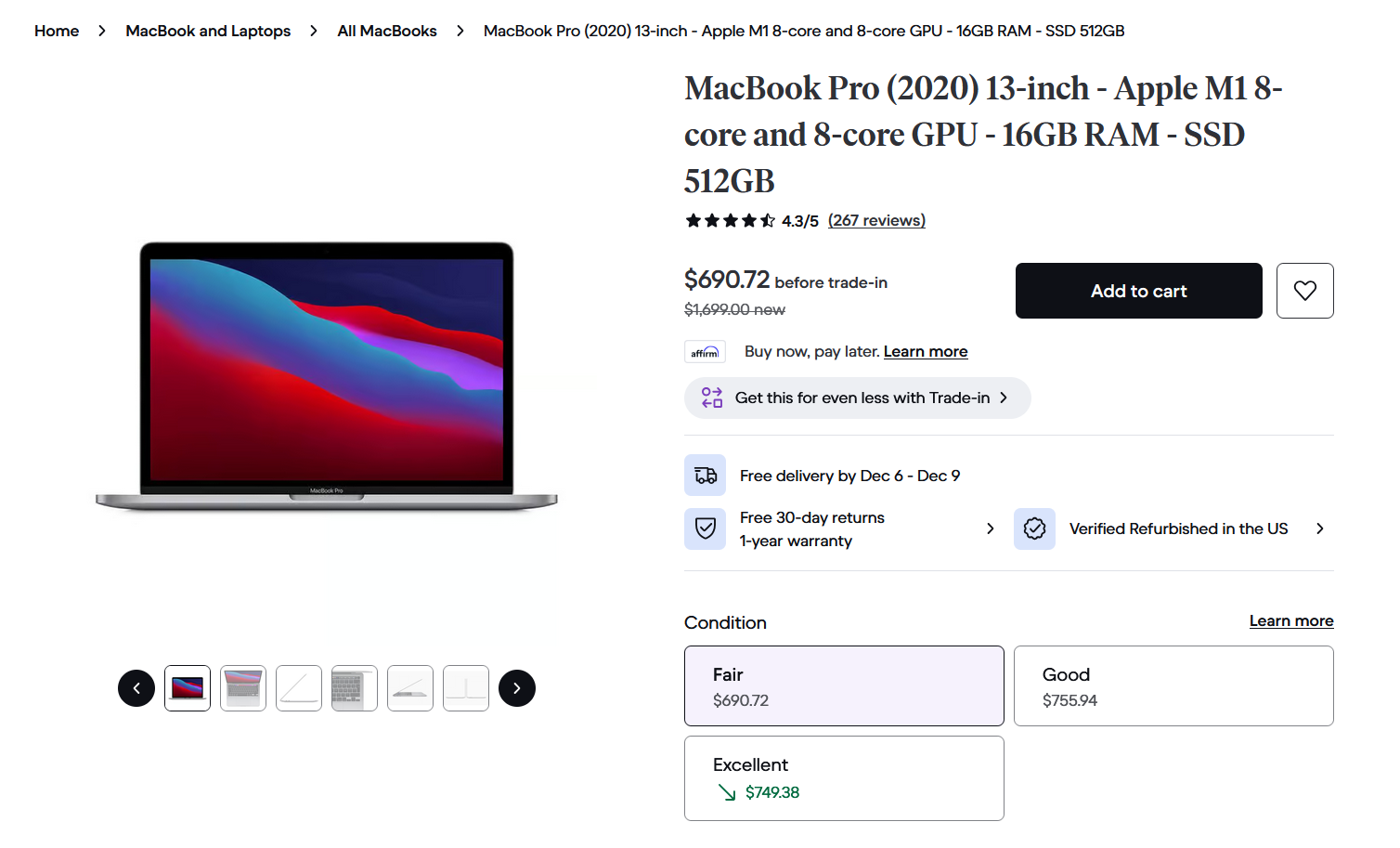

I was recently convinced that the M1 MBP is one of the cheapest and most cost effective laptops on the market right now. I know it sounds crazy but it appears to be true. You can get a m1 mbp refurbished (sometimes with warranty) for anywhere between $400 - $700. Making it a budget laptop. It also destroys anything in that price range in terms of performance and what you are getting.

We bought ours when it first came out after several terrible windows laptops. It still runs like new and there hasn’t been any need to consider upgrading (m1 air in our case). The biggest complaint is once or twice a year I need a usb c to A adapter for an old device or something.

I’m not in the Apple ecosystem but I have a 16" 32GB M1 MBP. It was given to me when I started my job as my work machine and the thing is a beast especially comparing it to all the terrible laptops Apple came out with prior (removal of mag safe, addition of touch bar, the keyboard issues). I still use that laptop for work today and it honestly doesn’t even feel like it’s aged a day. Everything is still extremely fast and I use my work laptop 8 hours a day for extremely demanding tasks (I’m a dev so things like running dozens of docker containers, compilation, Android emulators, multiple IDEs, etc).

Honestly agreed. For the majority of users that just do light office work and browsing it is a great piece of technology. Although i would say it is less about performance (because those people would be fine with even less) and more about build quality, battery life, fanless design and good screen.

The one issue i have with it is the 256gb non-removable storage. More actually than the 8gb RAM, which tbh for many people is enough for casual use.

I am still waiting for anyone not named apple to release a similarly priced fanless laptop with good build quality. With lunar lake it should finally be possible imo.

If you spend a little more (like $700) you can get 16gb ram and 512gb. For performance I think “light office work” is selling it short. It’s more than capable of handling heavy office work IMO.

Link for the sales or it didn’t happen.

Thanks for the link, I thought refurbished meant it would have warranty. Cool price if you’re in a pinch although personally I would not gamble on it without a warranty.

Yeah, I guess it depends on what kind of work. I thought that for demanding office stuff the 8gb RAM might end up mattering after all.

But your $700 with warranty is an amazing deal that makes this irrelevant. That really only leaves the single external monitor (without using workarounds) as a downside.

Where I am in Europe however I don’t think I could find the better specced models anywhere close to that price

It’s beautiful.

While I personally prefer this, I’m going to guess that the majority of people are generally not going to be using more than 2 or three usb ports at once. My take is that for most people, 2 Cs, an A, DP or HDMI would be optimal.

The availability of BT and wifi peripherals make this acceptable for many.

I still have a cutting plotter that uses RS232, but that’s connected to an oldish desktop, on the network, so a laptop never gets connected physically.

I’m not saying that this is good, simply that this is probably acceptable for many.

I have the same mac pictured above, and also a windows laptop with many ports.

The mac I plug into my work center via a single usb-c connection which charges it, connects it to my external monitor, and connects it to all of my USB equipment (about 6 items ranging from m&k to music equipment). Having only the one wire is huge in terms of making it easier to break down the machine from its setup and pack it up for the road.

The pc is connected separately to power as it can’t be powered through the usb-c, and to the monitor separately for some esoteric reason. So then I need a third cable to connect it to my equipment.

So in my case the less-is-more approach is actually preferable

that all being said

I’m sure other windows laptops can be configured with a one-wire solution just fine. And I don’t mean to pretend the 2x usb-c config was a popular choice or anything. Only like two models or something had it. The newer macbooks brought back sd card slots and hdmi and everything by popular demand.

I looked into it and you can still run everything off of just one usb-c on those ones, so at the end of the day more options is just better for more people

The mac I plug into my work center via a single usb-c connection which charges it, connects it to my external monitor, and connects it to all of my USB equipment

I do this with my Dell, which also has many ports ¯\_(ツ)_/¯

Thank you for proving me correct!

Was just using a new ROG something something laptop for a job. The power connector is some little rectangle thing and it almost fit in a USBc. I was surprised when it was unique. 1 wire aint happening on that.

Yep. My work laptop:

Haha I have almost exactly the same one. Probably a slightly older model. Works for most stuff but mine only has 8GB RAM which is a bit of a killer…

It’s most likely expandable, have you checked?

It’s a work laptop, not really my place to go fiddling with it, unfortunately

Dell makes some fantastic enterprise laptops

My 4 month old laptop has hdmi on the back, ethernet on the left, four usb 3.whatever slots with two on each side, two USB c slots on the right side, and a microsd slot.

I think it even has a 3.5mm headset jack but I’d have to get out of bed to check. I don’t have any peripherals that use 3.5mm anymore though so it’s just a nice little bonus.

What model of Dell is that?

Precision 3581

You have options.

I don’t. We have standardized on Macbook Pros at work because otherwise we’d have to use the company-issued image, which really sucks for development work (multi-day turnaround to get anything approved).

I’m interested in replacing my current laptop (E495 Thinkpad), and it’s really hard to find anything sensible w/ an RJ-45 port, especially one w/ decent Linux support. I want something in a similar form factor (14", or 16" if the bezels are really thin), but with updated internals (nothing fancy, but the 3500U is getting a bit slow for casual gaming).

I’ve been thinking of a Framework laptop, but the RJ-45 port is wack, only having 4 ports kind of sucks (they could have better density with those ports), and it doesn’t have the Trackpoint that I like so much about my Thinkpad. We’ll see what I end up with when I actually buy one though, but maybe I’ll have to take another look at Dell’s professional line.

anything decent with an RJ-45 port

Not sure if the current generation still has it, but work issued us techs with ThinkPad L14 Gen 3 laptops and I’ve been happy with it as a work device. It has an RJ-45 (was considered a requirement when they procured the laptops for techs) and mine has a Ryzen 5 Pro 5675U. Only complaints I would have for it is soldered USB-C connectors (which double as the only power source for the machine) and keyboard isn’t as nice as my personal T480 although definitely still fine.

I would caution against the 12th gen Intel i7 ThinkPads, we’ve had multiple internally have overheating issues or stuck in connected standby. My colleague wishes he never replaced his original work issue (same as mine).

The E14 and T14 still have them as well, and that’s what I’m interested in. I used to buy T-series, but they started soldering the RAM, so I switched to E-series for my last one. I don’t know if they solder RAM on the E14 though, they probably do.

I really miss my T440, which had a fantastic keyboard, but my E495 is still better than my Macbook Pro (hate that keyboard) and pretty much every other laptop I’ve used. Not sure how the newer Thinkpads are, but I definitely don’t want those ultra-thin keyboards so many vendors are going with.

And yeah, I’ll probably go AMD again, I want the APU perf and don’t want a dGPU.

The apple bois wont appreciate this

Look at all those ports I’ll never need

We should have had USBC 20 years ago.

It’s still cool to have options

That’s what prisoners say. You are so conditioned to it that you prefer it.

You should probably look in a mirror, Mr. Prisoner.

You’re the one asking to be constrained.

Your other comments are less nonsensical, so I’ll only focus on this one.

- Prisoners don’t say this

- More options is freedom, literally the exact opposite of being imprisoned

- Recognizing reality is conditioning…?

- You can’t just say the opposite of something factual is true, that’s what MAGAts do

- Oh

My point was that defending the pissing contest over standards that gave us consumers six ports instead of one to do all the same tasks really misses the mark, imo.

Not really, i don’t use usb-c for everything cause for me it gives no advantage. Like my LAN cable still works, my aux port is up-to my satisfaction, my DP port is straightforward.

Why should i go to USB-C if everything works? I’m not Anti Type-c but I’m also not Pro type-c, if that makes sense. I’ll use it if I’m missing on some new tech.

That’s the way to do it. I just wish Framework had a better selection of modules available and had more module bays on their laptops.

Is a dongle that doesn’t dangle even really a dongle at all?

Doubtful.

no body shaming please

What module would you like to have.

I would like RS-232 and RS-485 modules and a full size SD card reader would be nice too. It’s probably something I would end up building myself if I get a Framework laptop.

Edit: It looks like they have an SD card module now, nice.

I 3d printed a dongle that has a Logitech receiver in it. All their design files are online, so you can make your own pretty easily.

Oh, damn, that’s a game changing idea there.

What would you do with RS-232 and RS-485?

Time travel

Hook up my US Robotics 56k modem and dial up to the internet, where I can chat with hot babes

404

Hot babes not found

After your training to become a cage fighter, I presume?

I have a 485 adapter in my bag for BACnet and Modbus communications.

And what the hell, add RS-422 while you’re at it. And a parallel port! And the left side expansion port they used to have on the Amiga 500 and 1000!

And some ISDN BRI ports. And ATM and FDDI. And something I can do X.25 over. Oh, and Token Ring.

You’d be surprised to know how much of the world’s manufacturing infrastructure still uses it.

-485 is superior. Everyone knows it

UART consoles and model train control systems come to mind.

I just wish the existing dongles had a bit more density to them. That’s a lot of space for a micro-SD slot, they totally could have fit a full SD card in there as well, and perhaps even a micro-USB or headphone jack.

I like being able to swap them, but each USB-C port can handle a lot more than a single-use dongle.

Right?! If you’re going through the all trouble of mass producing the modules, etc., make them worth it. As it is, it’s a bunch of expensive squares.

Why are the modules so wide?

I guess they have to be the same, so they all have to be the maximum width of anything you might want to put in there.

That’s hot af

In case you’re not aware, that’s a Framework laptop.

One more reason for me to get one. Dammit.

Is it still owned by LTT? I don’t particularly like this LTT though.

Owned? The kid just bought stock.

Yup. If you limited your purchases to companies not partly owned by people you don’t like, you couldn’t buy from any public company and would have a very small selection from private companies.

Buy products that make sense, screw whoever else invests in it.

I didn’t know. I thought it was co-founded by him or something.

Nowhere near owned. LTT made a small investment.

Okay good.

I’ll be in my bunk.

I will always upvote a relevant Firefly reference.

yea this is the way. if only they had more high end components

Framework users: “Yeah, but my USB-C ports are recessed!”

Eh, I’d much rather have a USB-C dongle built-in to the laptop than in a separate bag that I’m definitely going to lose.

That also means we can still use the expansion cards for the Framework in any other device that also has a USB-C port. Need an SD card reader or a 2.5Gb LAN adapter? Not a problem, I’ll just grab one from my laptop.

Love mine.

ok but where’s the pcmcia slot! /sees myself out

It’s SDCARD since like 1999. Sheesh, get current mate! 🤣

I have a framework, and while this system is pretty cool, I don’t change the cards often and I only have 4 cards. I’d rather have some more built-in ports too.

I don’t change them ever. But I have the exact set of ports I need now

That’s one of the cool things about the framework, though, just the fact that you can, because I swap my ports all the time. I use it to game on my big TV at home, but I almost never need an HDMI port on the go, so I pop it out and pop in another USB-C or something.

Framework baby!!!

Oh my god

This is the way

still only 4 ports thou

What a waste of chassis space.

Yeah, I wish they had 2 dedicated USB-C ports (one on each side) and had the four swappable bays. The RJ-45 port also looks really dumb, I think they could have done something a bit more clever there.

I dunno - I’m pretty sure I’d choose the modern MacBook Pro’s ports over any of these other options.

We’re mindlessly bashing Apple here, we don’t need your sensible reasoning!

From my personal experience Apple products aren’t as great as the fanboys claim but are far far better than the haters say they are.

Where do you see Apple bashing? Most comments are about the general state of notebook ports.

Continue bashing, they use apple maths and only have ports on expensive models.

That picture is from the tech specs page of the base 14-inch

If you got that kind of money to spend on a laptop, sure. I really don’t.

Edit: to be clear, I know this is a stack of Macs in OP’s picture, but the entry models having basically no ports at all is a more recent development. Having to pick the Pro just to be able to connect your stuff without dongles or hubs is a bit insane considering the price (and price difference).

It really depends on what you use your laptop for. My 2013 MBP lasted 9 years and was how I got my work done. That comes out to 76¢ per day, and I make a fair bit more than that per hour.

But if you’re looking for a personal computer to surf the internet, yes, that could be cost prohibitive. But then it also matters less what device you buy.

As for ports, I’ve never needed a dongle on the 2013 model. I did need one for a USB A drive on the newest model, but this little thing has solved that problem easily. I didn’t even have to buy that since my monitor has USB A ports – I was just too lazy to reach around the back to use it every time. I’m not sure I understand all the complaints about the occasional need for a dongle.
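The 76¢ per day figure above is easy to sanity-check. Here is a minimal sketch; the purchase price is not stated in the comment, so the roughly $2,500 used below is an assumption back-computed from the quoted numbers, and the function names are made up for illustration.

```python
DAYS_PER_YEAR = 365  # leap days ignored; close enough for a rough estimate


def cost_per_day(price_usd: float, years: float) -> float:
    """Spread a purchase price evenly over the years the machine was in use."""
    return price_usd / (years * DAYS_PER_YEAR)


def implied_price(cents_per_day: float, years: float) -> float:
    """Work backwards from a daily cost (in cents) to the purchase price in USD."""
    return cents_per_day / 100 * years * DAYS_PER_YEAR


# Figures quoted in the comment: 9 years of use at 76 cents per day.
price = implied_price(76, 9)
print(f"Implied purchase price: ${price:,.2f}")

# Assumed round price of $2,500 (hypothetical, not from the comment).
print(f"Cost per day at $2,500 over 9 years: ${cost_per_day(2500, 9):.2f}")
```

The same function works for any budget comparison in this thread, for example a refurbished machine kept for only three or four years.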

I have an M2 Air, and all mine is missing from that is the SDXC slot, third TB4 and HDMI, and honestly, it’s fine. A third TB4/USB would be nice for when I’m doing my radio show and have to plug in my controller and mic while also charging my phone, but I already have a hub so it doesn’t bother me.

That said, the limited ports on my M1 mini are quite problematic. Two TB3/USB and two USB3, but one of them is lost to a DisplayPort cable for my second monitor. So I have a desktop computer that functionally has three USB sockets, which ain’t great. But again, I have a hub, so it’s not a huge problem.

An ethernet port is essential for any computer.

Exactly! What are you going to do if your router dies (or you mess something up fiddling w/ things)? I may only need it once/year or so, but when I do, it’s really important and I most likely can’t find the dongle.

An RJ-45 port could totally fit on there if they used one of those flip-down things that Dell has on their professional line.

I just use this … https://a.co/d/ijxaPae

Only issue I have is max 65W PD, which should be fine for most laptops, but some laptops can charge at 100W.

It’s really not. I have one on my work laptop and have never plugged an Ethernet jack into it. That stays permanently in my dock and gets transferred to the laptop via USB-C. All other non-desk work is done via … WiFi. Shock! Literally can’t tell the difference when making money.

Power, HDMI, a few USBs, and headphones, all you’ll ever likely need.

There’s no doubt a dongle for anything else.

Yes, and it’s better to be downgrading USB-C ports with adapters than to be stuck adapting a USB-A port to USB-C or ethernet.

SD card reader is nice to have if you fuck around with cameras and microphones.

Yeah, that can stay too.

Cause I love toting a dongle around and risk breaking the laptop because of it.

I did enough of that in the 90’s, TYVM

in the ’90s*

Username checks out.

Unless you want a desk setup. I have 2 monitors, kb, mouse, external dac, usb extension for thumbdrives, ethernet, usb soundcard for my mic and a kvm. That’s dp, hdmi, 6 usb-a, ethernet and I still sometimes plug-in 1-3 devices to charge them.

With that many connections, using a dock or a monitor with thunderbolt seems more practical than having a ton of stuff plugged into your laptop.

It’s not about it being practical. It’s about if it’s actually doable or not and how well it would work. Having the native ports will always be better than using a hub/dock.

Strongly disagree. I use a laptop with a thunderbolt dock. Being able to plug in a single cable to provide power, connect my monitor, all of my input devices, Ethernet, and anything else in a single cable is awesome. If I had to plug 10 things in manually it would be quite cumbersome. I disconnect the laptop daily as I bring it between work and home, as well as use it, well, as a portable laptop.

Kudos to you.

What you could do now is step out of your bubble and consider that other people have different use cases and might need or prefer to have more native ports.

You literally lose nothing by having more connectivity options.

Except the device inevitably ends up bigger and chunkier.

Yeah, because plugging in one thing is way harder than plugging in six.

This is a classic use case for a laptop dock.

That’s a very lazy, short-sighted and first world problem way of looking at this issue.

Why would having the option of using either a hub or plugging things on separately be worse than only being able to use a hub?

Because I don’t want a chonky boi laptop to carry around.

It sounds like you need a desktop computer or a docking station.

That’s a use case for a laptop dock if ever there was one.

Like I already said to another user: No. There are more than a few use cases that require a mobile set up for demos for example but that you’d also want to use in a desk setting. For example, architects or sw dev.

Why are you making an effort to justify getting shafted by corporations?

We aren’t justifying getting shafted by corporations. What I and the other person are saying is that at some point as your connections and cables multiply, you need to consolidate and streamline your setup for it to be more practical and actually mobile. I’m all for having all the basic necessity ports on my laptop, but when your desk ends up as a mess of cables and pulling out and putting back your laptop becomes bothersome with having to attach/re-attach everything every time, having a dock makes it much simpler. Subjecting yourself to setting up all those cables on both ends instead of just one end is the opposite of having a mobile workstation for quick setup and cleanup.

You’re still missing the forest for the trees.

There’s no real reason why you’d have to choose having a few ports + a hub or tons of ports + the option of using a hub.

If you prefer to “consolidate” your devices to a single point of failure on an external device then by all means, go ahead. I just think that it’s pretty crappy that options are being artificially limited and users of all people are making excuses for it.

In this situation a hub is still better. You can pack all the stuff away plugged into the hub for easier set up. If you’re plugging that all into your laptop, you’ll need to plug it all back in again when you move.

Which might be an issue for you but it’s not for me. Also, I prefer the flexibility to have all of the ports I might need, natively.

Zero USB-A ports? Hell, no…

Yeah, props to Apple for bringing back the card reader and HDMI. When I bought my early 2015 MBP I specifically went with the older model because these ports were removed on the newer one which also came with the shitty butterfly keyboard as well which they’ve also since discontinued.

Yeah M1+ Macs are great. I say this as a diehard Apple hater

Fuck firewire. Glad it’s dead. USB C is the best thing to happen to peripherals since the mouse.

I don’t know why this is controversial. I’m way more happy with 4x USB-C than 5 unique ports that will likely never be used on a regular basis, even when they were relevant

As long as a computer has 4 usb-c ports, I think you’re covered for everything.

Yes we had more different ports back in the days, but most were never used.

Usb-c is way more practical. Still that implies that you have more than 2 Usb-c ports.

At work both my monitors and networking go through the same port. The monitor also acts as a usb hub.

You can buy an adapter and plug everything in one port.

I love it personally.

I only have one Usb-c port on my Surface Go 1, but it’s linked to my screen with 4 usb-A ports and one more Usb-c port.

Same as you, I feel I have enough, at least when it’s hooked up to the screen.

You can only do that because your monitors are not high resolution and high refresh rate. The data cap for usb-c is not that high.

USB-C is just a connector, but Thunderbolt 5 uses it and for asymmetric uses (e.g. a monitor) it can hit 120Gbps.

Isn’t that going to support most monitors?

Please, list the devices that you know have tb5.

Also, that’s the total bandwidth in a best case scenario. You’re not factoring in that you’ll need to share that with all of the devices in a hub. That’s without mentioning that you need the hub (which also has a cost).

The USB4 protocol can handle 160Gb/s split asymmetrically (so, say, 120Gb/s out, 40Gb/s in), whereas the upper limit for DisplayPort’s highest bandwidth mode, Quad UHBR 20, is 80Gb/s in one direction. So you can saturate your DisplayPort 2.0 quad-channel with more than enough bandwidth to power three 10K 60Hz 30-bit (i.e. very high-end) monitors in DSC mode, and still only be using half the bandwidth of USB4, all using a single cable which I can also use to charge my earphones.

Can you break this down?

The 2017 model pictured in this post supported Thunderbolt 3, which was a 40 gbps connection. Supported display modes included up to 4k@120, 2x4k@60, or 5k@60, which was better than the then-standard HDMI 2.0.

What combination of resolution, frame rate, and color depth are you envisioning where having a dock handle a gigabit Ethernet connection and analog audio would require scaling down the display resolution through the same port?

By 2021, the MacBook Pros were supporting TB4, and the spec sheets on third party docking stations were supporting 8k resolutions, even if Macs themselves only supported 6k, or up to 4x4k.

Even if we talk about DisplayPort Alt Mode, a VESA standard developed in 2014, and supported in the 2017 models pictured in this post, that’s just a standard DP connection, which in 2017 supported HDR 5k@60. But didn’t support a whole separate dock with networking and USB ports.
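To put rough numbers on the question above, here is a back-of-the-envelope sketch. It counts only active pixels (width × height × refresh rate × bits per pixel) and ignores blanking intervals, link encoding overhead, DSC, and how Thunderbolt actually tunnels DisplayPort, so treat the results as lower bounds rather than spec figures. The 40 Gb/s link and gigabit Ethernet values are the ones quoted in the thread; the function name and the 24-bit default are assumptions for illustration.

```python
def raw_gbps(width: int, height: int, hz: int, bits_per_pixel: int = 24) -> float:
    """Uncompressed active-pixel data rate in Gb/s (no blanking, no encoding overhead)."""
    return width * height * hz * bits_per_pixel / 1e9


LINK_GBPS = 40.0      # Thunderbolt 3 figure quoted in the thread
ETHERNET_GBPS = 1.0   # gigabit Ethernet handled by the dock

# Display modes mentioned in the thread for the 2017 models.
modes = {
    "4K@120": raw_gbps(3840, 2160, 120),
    "2x 4K@60": 2 * raw_gbps(3840, 2160, 60),
    "5K@60 (30-bit)": raw_gbps(5120, 2880, 60, bits_per_pixel=30),
}

for name, gbps in modes.items():
    headroom = LINK_GBPS - gbps - ETHERNET_GBPS
    print(f"{name}: ~{gbps:.1f} Gb/s for video, ~{headroom:.1f} Gb/s nominally left over")
```

Under these simplified assumptions every listed mode plus gigabit Ethernet fits inside 40 Gb/s. Real links reserve bandwidth differently, so the leftover figure is only a rough indication.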

Yeah guys it’s way more practical to carry 11 usb c dongles everywhere you go

I’m no Apple fanboy (never owned a product of theirs and never will) but to be fair, those two USB-C ports can do everything the old, removed ports can do and more. The real crime here is not putting enough of them on the laptop.

Edit: The only port I’ll lament the removal of is the headphone jack. USB-C headphones are rare, adapters get lost, and bluetooth headphones compress the audio and have input lag. Everything else can go, though, and won’t be missed. (Okay fine ethernet can stay too.)

And there’s the soldered RAM and storage, and glued-in or screwed-in battery…

Dude, those two little USB-C ports do 50x what the ports on the bottom laptop could do

To make our laptops look clean and minimalistic, they made us buy a bunch of dongles and adapters.

Screw it, I’m buying a rugged laptop with the thickness of a desktop PC next

Is this rage bait? Those are different macbooks. I think the bottom ones are pros. My current Pro M2 has HDMI and magsafe. My M1 (Air?) is like the top one, but is not in fact a pro and therefore does not provide as many ports.

It’s a repost of a 6 year old Reddit post.

Yes this is rage bait using an old meme from back when Jony Ive was working for Apple; the top MacBook is from 2015 and for the last few years they put back MagSafe, HDMI, headphone port, and SD card readers.

Imagine seeing a stack of macbooks and becoming enraged!

The MacBook Pro still doesn’t have USB-A ports. I have an apple silicon model for work and have to use multiple dongles to connect all my peripherals. This is ridiculous for a 2000+ dollar computer.

I like USB C - mostly. If only Logitech would actually create a tiny usb-c unifying receiver…

It’s insane they haven’t made the bolt adapter a C yet. You can get very cheap and tiny A->C adapters but come on. Plus Logitech uses different adapters for different series.

I use an MX Master S and a G series clicky keyboard. They use different wireless adapters. I just connect via Bluetooth. Of course they also use different software managers, which is annoying.

I’ve found that while Bluetooth works well enough, my admittedly cheap Bluetooth mouse has an ever so slight lag to it. I only use it when out in the field working but it’s disconcerting to say the least.

I’ve seen the same before as well. Strangely enough though, my newer MX Master 3S at my office seems to jitter less when using Bluetooth compared to my older Master 3 (non-S) at home.

USB-C has been out for 10 years and it’s a huge mess. For some devices it makes sense to switch like an external hard drive, but for things like a wired keyboard, I don’t need to repurchase it for USB-C, that serves no purpose.

Nobody’s telling you to repurchase.

If the keyboard has the cable attached, you can attach a tiny (and extremely cheap) adapter on the end and just leave it there, and if it’s not attached, you can do that or just replace the cable.

Or you could just get one of the many laptops that still have a USB-A port.

As an fyi, the USB 4 spec doesn’t include USB A anymore; only USB C.

Good.

Apple removing the disgusting pile of shit of a connector without a single redeeming quality was a big part of the fact that cables have C ends instead now.

People still use usb a?

Wired keyboard and mouse, USB sticks/thumb drive, and USB-A to lightning cable. I think I have more USB-A peripherals than USB-C.

I think I have two.

Luckily you can buy several USB-A to USB-C adapters for ~$1 each, instead of demanding manufacturers persist an outdated spec — that’s been superseded for a decade — and creating significantly more e-waste and headaches for everyone in the long run.

I’m glad I can plug in one port and have a dual display setup, all peripherals, speakers, ethernet, charging, etc connected at my desk in one go.

If I want to leave, unplug one thing and I’m good to go.

I’m on the other side wishing peripherals would catch up and all become USB-C already. I’m tired of USB-A.

They remove the extra ports because they take up space in the board.

That aside if you’re buying Mac you took it from yourself. No one made you buy it.

As someone who daily drives a laptop for work and does field work on server facilities, finding a modern replacement that has both an RJ45 port and square USB (USB-A?) ports available on both sides has been a pain in the hassle.

And I’m not even crying over the loss of VGA any longer. That one I can live without.